An interesting report by Intentional Futures on the role, workflow, and experience of instructional designers:

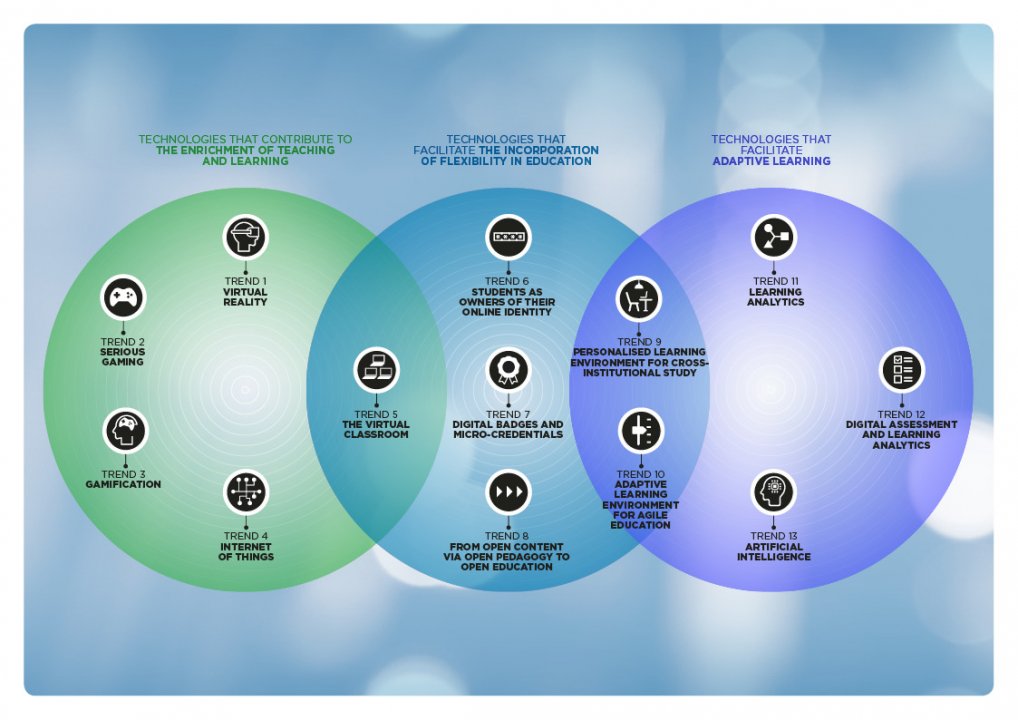

There are many technologies flooding the market that help foster innovative teaching and learning. These tools, such as learning management systems, lecture capture systems, simulation creators, authoring, and video and audio tools, have flooded into the classrooms and lecture halls of higher education. However, the inference that these innovative tools aid learning should not be immediately assumed. With faculties’ full work load, learning and implementing new and often complex tools to improve their online pedagogy isn’t a priority. In fact, as the needs and tools of institutions have evolved, instructional designers have positioned themselves as pivotal players in the design and delivery of learning experiences. Instructional designers exist to bridge the gap between faculty instruction and student online learning. But who, exactly, are instructional designers? What do they do? Where do they fit in higher education?

Their findings:

- Instructional designers number at least 13,000 in the U.S. alone.

- They are highly and diversely qualified.

- Contrary to popular belief, they do more than just design instruction.

- Above all, they struggle to collaborate with faculty.

- One thing is certain: instructional designers are dedicated to improving learning with technology

I recognise their findings when I look at my own team and its activities.

Highly and diversely qualified

What the report calls "instructional designer" we have split into two different job titles:

- learning developer: focused on creating the learning design of a course (constructive alignment) and often acting as project manager

- instructional designer: expert in how to set up the learning design in a specific platform (we have (open)edX, Blackboard and Brightspace).

All the learning developers have some form of educational science background, ranging from a master's in educational science to experience as a lecturer with additional courses. The instructional designers have a much more technical background; one of my instructional designers is a water management engineer. They have all finished a Bachelor and a Master, and some even have a PhD in education.

They do more than just design instruction

According to the report most instructional designers have four categories of responsibilities:

- Design instructional materials and courses, particularly for digital delivery

- Manage the efforts of faculty, administration, IT, other instructional designers, and others to achieve better student learning

- Train faculty to leverage technology and implement pedagogy effectively

- Support faculty when they run into technical or instructional challenges

The activities of my team align perfectly with these four categories, although sometimes they do even more, such as marketing, administration, and policy advice.

Struggle to collaborate with faculty

With every course team, an instructional designer has to win the trust of the faculty members. Faculty members are the experts on the topic of the course and have been teaching for many years. Still, they have to accept that designing and delivering an online or blended course is a different cup of tea than classroom teaching. The instructional designers have done this for many courses, so they know how to design an online course.

Dedicated to improving learning with technology

Despite the struggle with faculty, they are always dedicated to making it work. Sometimes this means that the end result is not the best possible, but it is what could be achieved within the time span and context of that course.

Recommendations

The report makes a few recommendations. There are two I want to strongly emphasize:

- Institutional leaders and administration - Involve instructional designers early, often, and throughout your technology transition. Develop clear standards that are expressed to all participants — institutional leaders, instructional designers, faculty, and students. Also, think about incentivising faculty to work with instructional designers from the get-go. Survey respondents made clear that they’d like more resources allocated to their work, which could have a high return on investment in terms of student success.

- Faculty - We know student success is top priority for you. An instructional designer can help you engage your students and give you more class time by using online tools. There is potential impact to be made for your students by collaborating and using new technologies that instructional designers can guide you to. They share your goal and want to see you shine for your students.